Introducing the Coalition for Secure AI (CoSAI)

Today, I am delighted to share the launch of the Coalition for Secure AI (CoSAI). CoSAI is an alliance of industry leaders, researchers, and developers dedicated to enhancing the security of AI implementations. CoSAI operates under the auspices of OASIS Open, the international standards and open-source consortium.

CoSAI’s founding members include industry leaders such as OpenAI, Anthropic, Amazon, Cisco, Cohere, GenLab, Google, IBM, Intel, Microsoft, Nvidia, Wiz, Chainguard, and PayPal. Together, our goal is to create a future where technology is not only cutting-edge but also secure-by-default.

CoSAI’s Scope & Relationship to Other Projects

CoSAI complements existing AI initiatives by focusing on how to integrate and leverage AI securely across organizations of all sizes and throughout all phases of development and usage. CoSAI works with NIST, the Open Source Security Foundation (OpenSSF), and other stakeholders through AI security research, best practice sharing, and joint open-source initiatives.

CoSAI’s scope includes securely building, deploying, and operating AI systems to mitigate AI-specific security risks such as model manipulation, model theft, data poisoning, prompt injection, and confidential data extraction. We must equip practitioners with integrated security solutions, enabling them to leverage state-of-the-art AI controls without needing to become experts in every facet of AI security.

Where possible, CoSAI will collaborate with other organizations driving technical advancements in responsible and secure AI, including the Frontier Model Forum, Partnership on AI, OpenSSF, and MLCommons. Members, such as Google with its Secure AI Framework (SAIF), may contribute existing work in the form of thought leadership, research, best practices, projects, or open-source tools to enhance the partner ecosystem.

Collective Efforts in Secure AI

Securing AI remains a fragmented effort, with developers, implementors, and users often facing inconsistent and siloed guidelines. Assessing and mitigating AI-specific risks without clear best practices and standardized approaches is a challenge, even for the most experienced organizations.

Security requires collective action, and the best way to secure AI is with AI. To participate safely in the digital ecosystem — and secure it for everyone — individuals, developers, and companies alike need to adopt common security standards and best practices. AI is no exception.

Objectives of CoSAI

The following are the objectives of CoSAI.

Key Workstreams

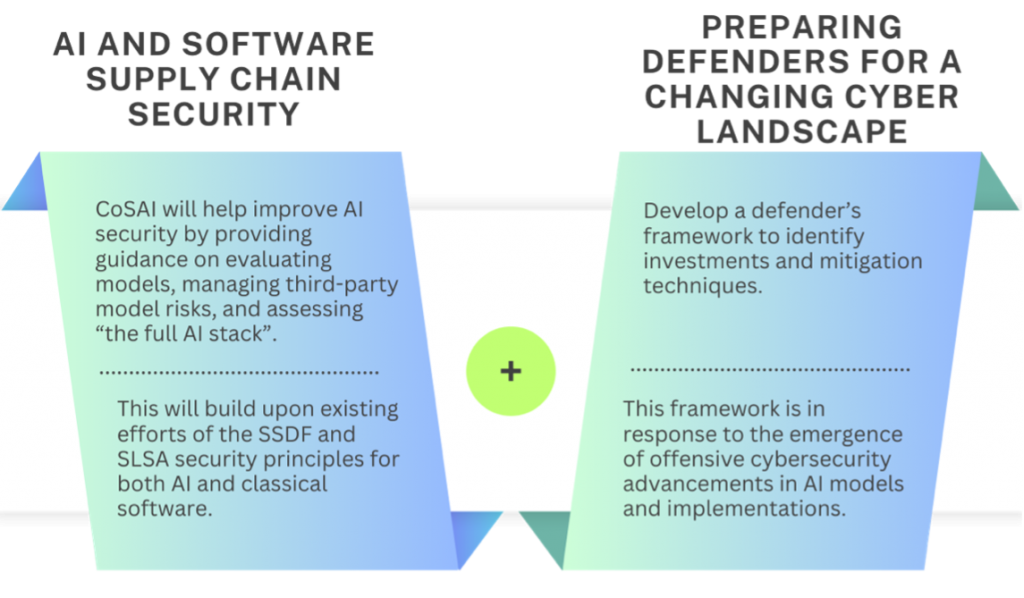

CoSAI will collaborate with industry and academia to address key AI security issues. Our initial workstreams include AI and software supply chain security and preparing defenders for a changing cyber landscape.

CoSAI’s diverse stakeholders from leading tech companies invest in AI security research, share security expertise and best practices, and build technical open-source solutions and methodologies for secure AI development and deployment.

CoSAI is moving forward to create a safer AI ecosystem, building trust in AI technologies and ensuring their secure integration across all organizations. The security challenges arising from AI are complicated and dynamic. We are confident that this coalition of technology leaders is well-positioned to make a significant impact in enhancing the security of AI implementations.
