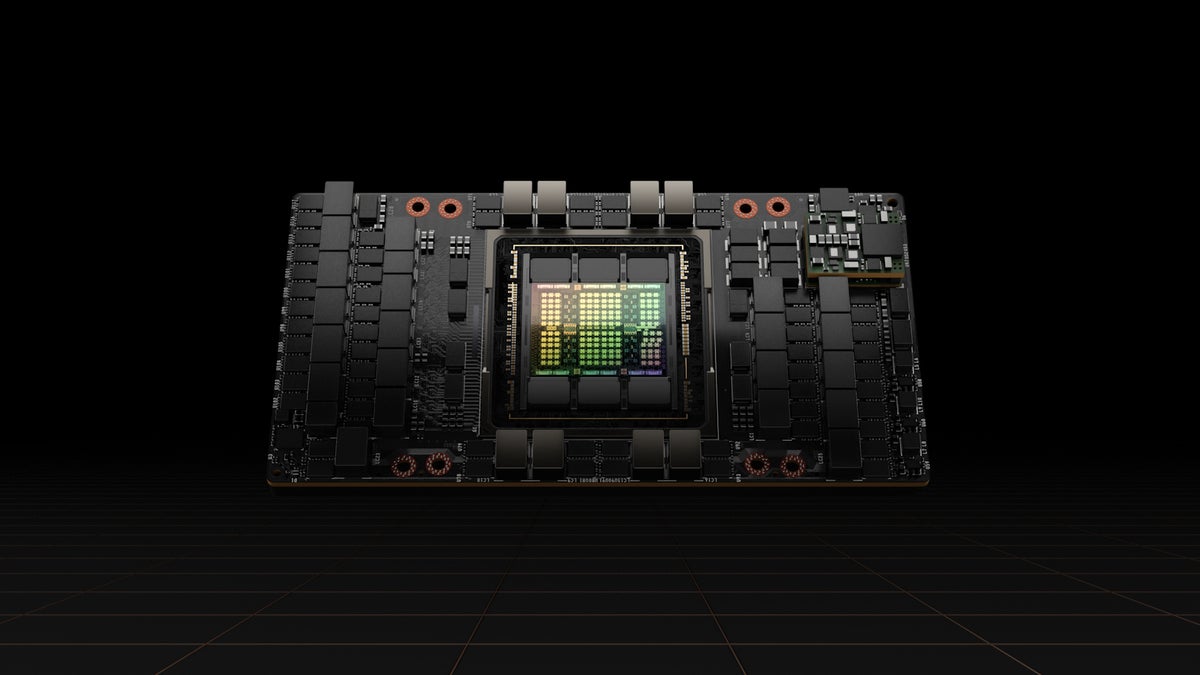

Nvidia unveils a new GPU architecture designed for AI data centers

While the rest of the computing industry struggles to get to one exaflop of computing, Nvidia is about to blow past everyone with an 18-exaflop supercomputer powered by a new GPU architecture.

The H100 GPU has 80 billion transistors (the previous generation, Ampere, had 54 billion), nearly 5TB/s of external connectivity, and support for PCIe Gen5 and High Bandwidth Memory 3 (HBM3), which enables 3TB/s of memory bandwidth, the company says. Due out in the third quarter, it’s the first in a new family of GPUs named Hopper, after Rear Admiral Grace Hopper, who helped create COBOL and popularized the term “computer bug.”

This GPU is meant to power data centers designed to handle heavy AI workloads, and Nvidia claims that 20 of them could sustain the equivalent of the entire world’s Internet traffic.

Hopper also comes with the second generation of Nvidia’s Secure Multi-Instance GPU (MIG) technology, which allows a single GPU to be partitioned securely among multiple tenants. “H100 can natively host seven cloud tenants fully isolated with I/O virtualization and independently secured with confidential computing capabilities each instance. Now this computing power can be securely divided between different users and cloud tenants,” said Paresh Kharya, Nvidia’s senior director of Data Center Computing. That’s seven times the MIG capabilities of the previous generation, he said.

New to the H100 is a function called confidential computing, which protects AI models and customer data while they are being processed. Kharya noted that sensitive data is often encrypted at rest but is often unprotected while in motion. Confidential computing protects the data while it is in use, he said.

Hopper also has the fourth-generation NVLink, Nvidia’s high-speed interconnect technology. Combined with a new external NVLink Switch, the new NVLink can connect up to 256 H100 GPUs at nine times higher bandwidth than the previous generation.

Finally, Hopper adds new DPX instructions to accelerate dynamic programming, the practice of breaking down problems with combinatorial complexity into simpler subproblems. It is employed in a wide range of algorithms used in genomics and graph optimization. Hopper’s DPX instructions will accelerate dynamic programming by a factor of seven, Kharya said.
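To make the idea of dynamic programming concrete, here is a minimal sketch of a genomics-style example: scoring the best local alignment between two DNA strings, in the style of the Smith-Waterman algorithm. It is plain CPU Python and does not use DPX instructions or any Nvidia API. The function name and the scoring values (`match`, `mismatch`, `gap`) are assumptions chosen for illustration and do not come from Nvidia.

```python
def local_alignment_score(a: str, b: str,
                          match: int = 2,
                          mismatch: int = -1,
                          gap: int = -2) -> int:
    """Return the best local alignment score between a and b.

    Illustrative sketch only: scoring values are assumptions, and gaps
    use a simple linear penalty.
    """
    # prev holds the previous row of the score table; row 0 is all zeros.
    prev = [0] * (len(b) + 1)
    best = 0
    for i in range(1, len(a) + 1):
        curr = [0] * (len(b) + 1)
        for j in range(1, len(b) + 1):
            # Each cell reuses three already-solved subproblems.
            diag = prev[j - 1] + (match if a[i - 1] == b[j - 1] else mismatch)
            up = prev[j] + gap        # gap in b
            left = curr[j - 1] + gap  # gap in a
            # Local alignment never drops below zero (start a new alignment).
            curr[j] = max(0, diag, up, left)
            best = max(best, curr[j])
        prev = curr
    return best


if __name__ == "__main__":
    # The shared region "ACGT" dominates the score for these two strings.
    print(local_alignment_score("GGACGTCC", "TTACGTAA"))
```

Each table cell is computed from three smaller subproblems with a handful of additions and a maximum, which is the kind of recurrence that dynamic programming repeats across very large tables.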

Promise of the fastest supercomputer

Together, these technologies go into Nvidia DGX H100 systems, 5U rack-mounted units that serve as the building blocks for powerful DGX SuperPOD supercomputers.

Kharya said the new DGX H100 would offer 32 petaflops of AI performance, six times more than the DGX A100 currently on the market. Combined with the NVLink Switch system, 32 DGX H100 nodes would form a DGX SuperPOD that offers one exaflop of AI performance and a bisection bandwidth of 70 terabytes per second, 11 times higher than the DGX A100 SuperPOD.

To show off the H100’s capabilities, Nvidia is building a supercomputer called Eos with 18 DGX H100 SuperPODs containing a total of 4,600 H100 GPUs joined by NVLink 4 and InfiniBand switches, for a total of 18 exaflops of AI performance. To put that in perspective, according to the most recent Top500 list of supercomputers, the peak performance of the fastest supercomputer, Fugaku, reaches half an exaflop; Nvidia is promising to go 36 times faster than that.
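The headline numbers are rounded, and a quick back-of-the-envelope check shows how they fit together. The sketch below uses only the figures quoted in this article (32 petaflops per DGX H100, 32 nodes per SuperPOD, 18 SuperPODs, half an exaflop for Fugaku) plus one assumption the article does not state: eight H100 GPUs per DGX H100 system. It takes Nvidia’s AI-performance figure and Fugaku’s Top500 peak figure as quoted, without adjusting for any difference in how the two are measured.

```python
# Figures quoted in the article.
DGX_H100_AI_PETAFLOPS = 32
NODES_PER_SUPERPOD = 32
SUPERPODS_IN_EOS = 18
FUGAKU_PEAK_PETAFLOPS = 500  # "half an exaflop"

# Assumption: not stated in the article.
GPUS_PER_DGX_H100 = 8


def superpod_petaflops() -> int:
    """AI performance of one 32-node DGX SuperPOD, in petaflops."""
    return DGX_H100_AI_PETAFLOPS * NODES_PER_SUPERPOD


def eos_petaflops() -> int:
    """AI performance of Eos (18 SuperPODs), in petaflops."""
    return SUPERPODS_IN_EOS * superpod_petaflops()


def eos_gpu_count() -> int:
    """Total H100 GPUs in Eos under the 8-GPUs-per-system assumption."""
    return SUPERPODS_IN_EOS * NODES_PER_SUPERPOD * GPUS_PER_DGX_H100


def eos_vs_fugaku() -> float:
    """Ratio of Eos AI performance to Fugaku's quoted peak."""
    return eos_petaflops() / FUGAKU_PEAK_PETAFLOPS


if __name__ == "__main__":
    print(superpod_petaflops(), eos_petaflops(), eos_gpu_count(), eos_vs_fugaku())
```

Under these inputs, one SuperPOD comes to 1,024 petaflops (about one exaflop), Eos to 18,432 petaflops (about 18 exaflops) and 4,608 GPUs, and the ratio to Fugaku to roughly 36.9, which is consistent with the rounded figures of 4,600 GPUs and 36 times.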

Eos will provide bare-metal performance with multi-tenant isolation, as well as performance isolation to ensure that one application does not impact any other, said Kharya.

“Eos will be used by our AI research teams, as well as by numerous other software engineers and teams who are creating our products, including autonomous vehicle platform and conversational AI software,” he said.

Nvidia did not offer a timeline for when Eos would be deployed. DGX H100 PODs and SuperPODs are expected later this year.

Copyright © 2022 IDG Communications, Inc.