UCS X-Series – The Future of Computing – Part 2 of 3 – Cisco Blogs

As we move to wide-scale deployment, we believe the UCS X-Series architectural message is resonating extremely well; deployment feedback has been fantastic.

In the first post of this blog series, we discussed how heterogeneous computing is causing a paradigm shift in computing and shaping the UCS X-Series architecture. In this post, we discuss the electromechanical aspects of the UCS X-Series architecture.

The form factor remains constant for the life cycle of the product, so the electromechanical aspects that shape the enclosure design are critical in the design phase.

Electro-mechanical Anchors

Some of the anchors are:

- Socket density per “RU”

- Memory density

- Backplane-less IO

- Mezzanine options

- Volumetric space for logic

- Power footprint & delivery

- Airflow (measured as CFM) for cooling

Socket and memory density are very important when comparing different vendors’ products and, in general, indicate how efficiently the platform mechanicals have been designed within a given “RU” envelope. The ratio of volumetric space required for mechanical integrity versus logic is another important criterion. These criteria helped us zero in on 7RU as the chassis height while offering more volumetric space for logic than similar “RU” designs in the industry.

Previous generations of compute platforms relied on a backplane for connectivity. UCS X-Series does not use a backplane; it uses direct-connect IO interconnects instead. As IO technology advances, nodes and the IO interconnects can be upgraded seamlessly, because the elements that need to change are modular and not fixed on a backplane. As IO interconnect speed increases, its reach decreases, making it harder and harder to scale in the electrical domain. UCS X-Series has been designed with a hybrid connector approach that supports electrical IO by default and is ready for optical IO in the future. This optical IO option is optimized for intra-chassis connectivity. Direct-connect IO without a backplane also reduces airflow resistance and helps remove heat efficiently from inlet to outlet.

Power distribution

Rack power density per “RU” is hitting 1kW and will soon go beyond that. The majority of existing server designs use well-established 12V distribution to simplify down-conversion to CPU voltages. However, as current density increases, 12V distribution adds to connector costs, PCB layer count, and routing challenges. Seeing the power requirements of next-generation servers, UCS X-Series chose a higher distribution voltage of 54V instead of 12V. For the same delivered power, 54V distribution reduces current density by 4.5 times and ohmic (I²R) losses by about 20 times compared to 12V. Moving from a 12V to a 54V DC output also simplifies the main PSU design and makes onboard power distribution more efficient.
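The 4.5x and roughly 20x figures follow from basic circuit relations: for a fixed delivered power, current scales as 1/V, and resistive loss in a fixed conductor scales as the square of the current. Here is a minimal sketch of that arithmetic. The 1kW load and 1 milliohm path resistance are illustrative assumptions, not values from the UCS X-Series design, and the function names are hypothetical.

```python
def distribution_current_a(power_w: float, bus_voltage_v: float) -> float:
    """Current needed to deliver power_w at bus_voltage_v (I = P / V)."""
    return power_w / bus_voltage_v


def ohmic_loss_w(current_a: float, resistance_ohm: float) -> float:
    """Resistive loss in the distribution path (P_loss = I^2 * R)."""
    return current_a ** 2 * resistance_ohm


# Illustrative assumptions (not from the post): 1 kW load, 1 milliohm path.
LOAD_W = 1000.0
PATH_RESISTANCE_OHM = 0.001

i_12 = distribution_current_a(LOAD_W, 12.0)
i_54 = distribution_current_a(LOAD_W, 54.0)

# The current ratio equals the voltage ratio, 54 / 12.
current_ratio = i_12 / i_54
# The loss ratio is the square of the current ratio for the same resistance.
loss_ratio = (
    ohmic_loss_w(i_12, PATH_RESISTANCE_OHM)
    / ohmic_loss_w(i_54, PATH_RESISTANCE_OHM)
)

print(f"12V bus: {i_12:.1f} A, 54V bus: {i_54:.1f} A")
print(f"current reduction: {current_ratio:.2f}x")
print(f"ohmic loss reduction: {loss_ratio:.2f}x")
```

The ratios do not depend on the assumed load or resistance; those values cancel out, which is why the comparison holds for any conductor that is kept the same between the two cases.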

Server Power Consumption

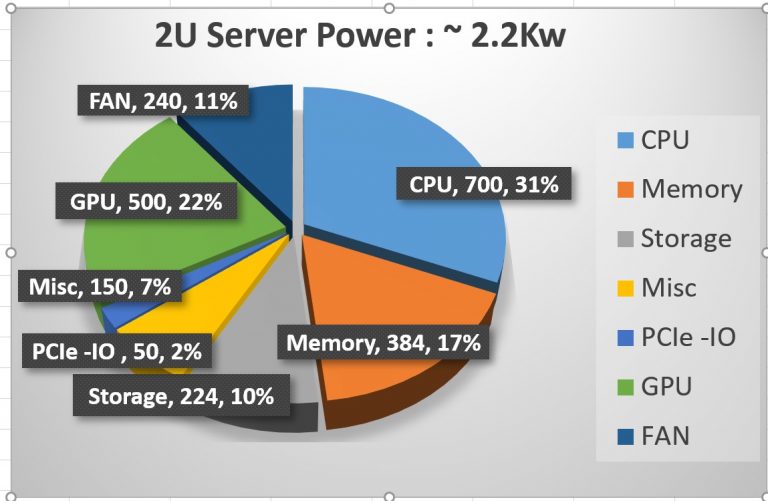

We are seeing CPU TDP (Thermal Design Power) increase by 75-100W on a two-year cadence. Compute nodes will soon see 350W per socket, and they need to be ready for 500W+ by 2024. A 2-socket server with GPU and storage requires close to 2.2kW, not accounting for any distribution losses. To cool this 2-socket server, the fan modules alone will consume around 240W, or 11% of total power. Factoring in distribution efficiencies at each intermediate stage of conversion from the AC input, we are looking at around 2.4kW of power draw. So, in a rack of 20 x 2RU servers, fan power alone will consume 4800W. Modular blade platforms like UCS X-Series, with centralized cooling and bigger fans, offer much higher CFM at lower power consumption. Even so, fan power consumption is becoming a substantial portion of the total power budget.
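The fan-power numbers above can be checked with a few lines of arithmetic. This sketch uses only the figures quoted in the paragraph; the variable names are illustrative, and the implied conversion efficiency is an inference from the 2.2kW and 2.4kW figures rather than a number stated in the post.

```python
# Figures quoted in the paragraph above.
SERVER_LOAD_W = 2200.0   # 2-socket server with GPU and storage, before losses
FAN_POWER_W = 240.0      # fan modules per server
SERVER_DRAW_W = 2400.0   # per-server draw after conversion losses
SERVERS_PER_RACK = 20    # 20 x 2RU servers

# Share of the server's power that goes to fans.
fan_share = FAN_POWER_W / SERVER_LOAD_W

# Fan power summed across the rack.
rack_fan_power_w = FAN_POWER_W * SERVERS_PER_RACK

# Inferred (not stated in the post): end-to-end conversion efficiency
# implied by 2.2 kW of load versus 2.4 kW of draw.
implied_efficiency = SERVER_LOAD_W / SERVER_DRAW_W

print(f"fan share of server power: {fan_share:.1%}")
print(f"rack fan power: {rack_fan_power_w:.0f} W")
print(f"implied conversion efficiency: {implied_efficiency:.1%}")
```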

Cooling

Advances in semiconductors and magnetics allow us to deliver more power over the lifetime of the chassis. However, it is difficult to pull off a dramatic upgrade in airflow (measured as fan CFM), as technological advances there are slow and incremental. Additionally, cost economics dictate the use of passive heat transfer techniques to cool the CPU in a server. This makes defining fan CFM requirements for cooling the compute nodes a multivariable problem.

Unlike standard rack designs, which use spread-core CPU placement, UCS X-Series uses a shadow-core design principle (one CPU sits downstream of the other in the airflow path), which complicates cooling even further.

Banks of U.2/E3 storage drives, drawing upwards of 25W each, and accelerators on the front side of the blade restrict the air reaching the CPU and also pre-heat it.

The UCS X-Series design approached these challenges holistically. First and foremost is the development of a state-of-the-art fan module delivering class-leading CFM. The second is dynamic power management coupled with a fan control algorithm that adapts as cooling demand grows and ebbs. Compute nodes are designed with high- and low-CFM paths that channel appropriate airflow for cooling. Additionally, power management options give customers configurable knobs to optimize for fan power or for high-performance mode.

Emergence of Alternate Cooling Technologies

Spot cooling of a CPU/GPU at 350W is approaching the limits of air cooling. Doubling airflow results in 30% more cooling, but it adds 6-8 times more fan power, a diminishing return.

Data centers are not yet ready for liquid cooling on a wholesale basis. Immersion cooling requires a complete overhaul of the rack. Hyperscalers will lead the early adoption cycle, and enterprise customers will eventually get there, but the tipping point from air to liquid cooling is still unknown. Air cooling is not going away, as we still need to cool memory, storage, and other components that are operationally difficult to liquid-cool. We need to collect more data and answer the following critical questions before liquid cooling becomes attractive.
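The 6-8x figure is consistent with the ideal fan affinity laws, under which airflow scales linearly with fan speed while fan power scales with its cube. Here is a minimal sketch under that idealized assumption. Real fans and chassis airflow impedance deviate from the cubic law, the function name is hypothetical, and the 30% cooling gain is taken from the text rather than derived.

```python
def fan_power_multiplier(airflow_multiplier: float, exponent: float = 3.0) -> float:
    """Relative fan power for a relative airflow change.

    Assumes the ideal fan affinity law (power ~ airflow^3) by default.
    """
    return airflow_multiplier ** exponent


# Cooling gain for doubled airflow, as quoted in the text (30% more cooling).
COOLING_GAIN_AT_2X_AIRFLOW = 1.30

power_at_2x = fan_power_multiplier(2.0)

# Cooling delivered per unit of fan power, relative to the baseline (1.0).
relative_cooling_per_fan_watt = COOLING_GAIN_AT_2X_AIRFLOW / power_at_2x

for airflow in (1.0, 1.25, 1.5, 2.0):
    print(f"airflow x{airflow:.2f} -> fan power x{fan_power_multiplier(airflow):.2f}")
print(f"cooling per fan watt at 2x airflow: {relative_cooling_per_fan_watt:.2f} of baseline")
```

Under the ideal cubic law, doubling airflow costs 8 times the fan power, the upper end of the quoted range, so each fan watt buys far less cooling than it did at baseline.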

- Do we really need liquid cooling for all racks, or only for the few racks that host high-TDP servers?

- Is liquid cooling more for greenfield deployments as a means to reduce fan power/acoustics than for high-TDP CPU/GPU enablement?

- Are there any compliance requirements or mandates that target energy reduction in the data center by certain dates?

- How does a TCO analysis of fan power savings compare with the total cost of a liquid cooling deployment?

- Is the customer OK with spending more on fan power than on retrofitting the infrastructure for liquid cooling?

- Is liquid cooling going to help deploy more high-TDP servers without upgrading power to the rack?

- For example, saving 100W per 1U server in fan power translates to 3.6kW of additional available power in a rack of 36 x 1U servers.

UCS X-Series does, however, support a hybrid model – a combination of air and liquid cooling – for when air cooling alone is not sufficient. Watch for more details on liquid cooling in UCS X-Series in upcoming blogs.

In the next blog, we will elaborate on the trends that drove the UCS X-Series internal architecture.

Resources

UCS X-Series – The Future of Computing Blog Series – Part 1 of 3
